Resources

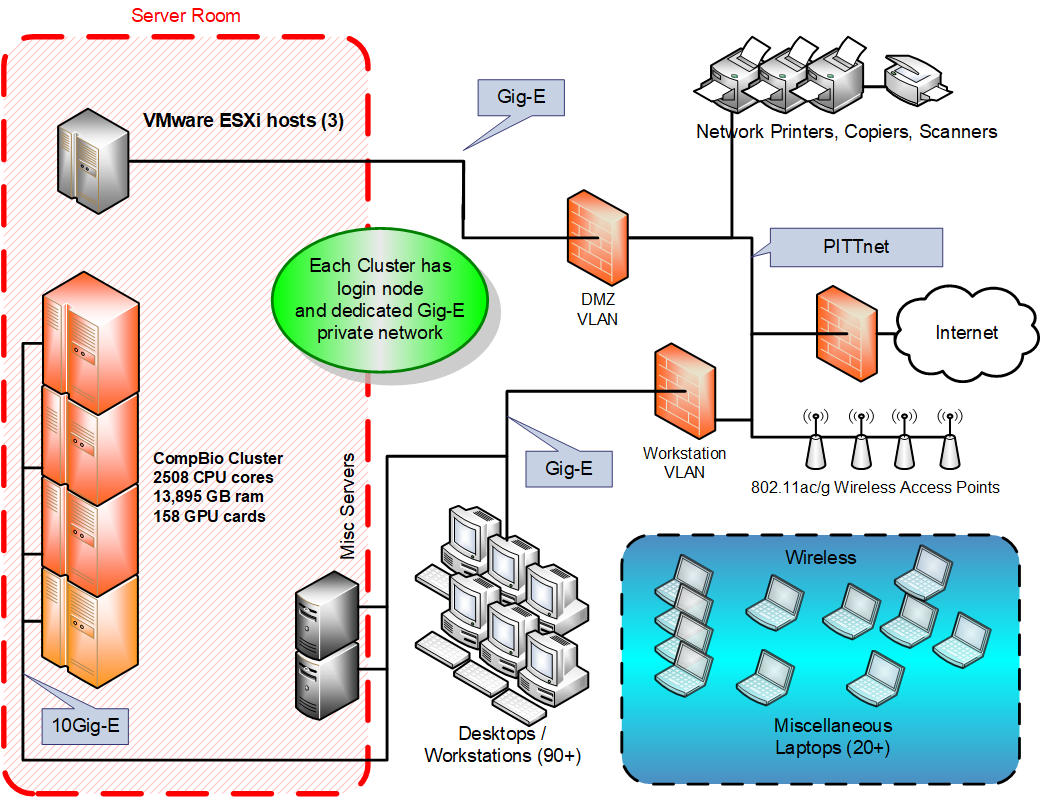

The Department of Computational Biology relies on a large pool of computers for its research. These include high-end workstations in the offices and several rack-mounted Linux clusters housed at the NOC. The clusters run complex simulations, models, and computations that take a long time to complete or that can run in parallel across nodes.

| Cluster | Nodes * | CPU cores (total) | Memory (total, GB) | Storage (total, TB) ** | GPU cards |
|---|---|---|---|---|---|
| CPU | 56 | 1,696 | 9,876 | 338 | N/A |
| GPU | 28 | 812 | 4,107 | 120 | 158 |
| Totals | 84 | 2,508 | 13,895 | 458 | 158 |

*Node counts include compute nodes only; the 3 login servers and 15 storage servers are not counted.

** Storage counts only the available local workspace (scratch).
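As a rough guide to typical node size, the table's figures can be turned into per-node averages. The Python sketch below does only that arithmetic; the names `clusters` and `per_node` are illustrative, and because the nodes are probably not identical (the GPU cluster mixes several card generations), the results are averages rather than the specification of any particular node.

```python
# Per-cluster figures copied from the table above (compute nodes only).
clusters = {
    "CPU": {"nodes": 56, "cores": 1696, "mem_gb": 9876, "scratch_tb": 338},
    "GPU": {"nodes": 28, "cores": 812, "mem_gb": 4107, "scratch_tb": 120},
}


def per_node(cluster: dict) -> dict:
    """Average cores, memory (GB) and scratch (TB) per compute node,
    plus memory per core. Assumes the table totals are exact."""
    n = cluster["nodes"]
    return {
        "cores": cluster["cores"] / n,
        "mem_gb": cluster["mem_gb"] / n,
        "scratch_tb": cluster["scratch_tb"] / n,
        "mem_gb_per_core": cluster["mem_gb"] / cluster["cores"],
    }


if __name__ == "__main__":
    for name, c in clusters.items():
        avg = per_node(c)
        print(
            f"{name}: {avg['cores']:.1f} cores, {avg['mem_gb']:.1f} GB RAM, "
            f"{avg['scratch_tb']:.1f} TB scratch per node; "
            f"{avg['mem_gb_per_core']:.1f} GB per core"
        )
```

For the CPU cluster this works out to about 30.3 cores, 176.4 GB of RAM and 6.0 TB of scratch per node; for the GPU cluster, 29 cores and about 146.7 GB of RAM per node.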

GPU Computing:

In addition to the CPU cluster nodes, we have added rack-mount servers that house Graphics Processing Units (GPUs), which can be used to accelerate scientific computations. The GPU cluster contains 158 GPUs: 20 NVIDIA Titan X, 16 GTX 1080, 22 GTX 1080 Ti, 66 RTX 2080 Ti, 11 Titan X (Pascal), 4 Titan V, 4 Titan RTX, 6 Tesla K40m, 4 Tesla V100, and 5 A100 cards. Each of these machines runs Linux and uses the NVIDIA CUDA software development kit (SDK). Software such as NAMD and Amber already supports running on GPU hardware.
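The per-model counts above can be tallied as a consistency check against the cluster total, and the same tally can be compared with what a single node actually reports. The Python sketch below is illustrative: `GPU_INVENTORY`, `count_models` and `local_gpus` are hypothetical names, and it assumes `nvidia-smi` (installed with the NVIDIA driver) is on the node's `PATH`. The exact model strings `nvidia-smi` prints depend on the driver, so they will not necessarily match the short names used here.

```python
import shutil
import subprocess
from collections import Counter

# Card counts per model, as listed in the text above.
GPU_INVENTORY = Counter({
    "Titan X": 20,
    "GTX 1080": 16,
    "GTX 1080 Ti": 22,
    "RTX 2080 Ti": 66,
    "Titan X (Pascal)": 11,
    "Titan V": 4,
    "Titan RTX": 4,
    "Tesla K40m": 6,
    "Tesla V100": 4,
    "A100": 5,
})


def count_models(smi_csv: str) -> Counter:
    """Tally GPU names from the output of
    `nvidia-smi --query-gpu=name --format=csv,noheader` (one name per line)."""
    return Counter(line.strip() for line in smi_csv.splitlines() if line.strip())


def local_gpus() -> Counter:
    """GPUs visible on the current node; empty if nvidia-smi is unavailable or fails."""
    if shutil.which("nvidia-smi") is None:
        return Counter()
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name", "--format=csv,noheader"],
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        return Counter()
    return count_models(result.stdout)


if __name__ == "__main__":
    print("Cluster total:", sum(GPU_INVENTORY.values()))  # 158
    print("This node:", dict(local_gpus()))
```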

Application Servers:

The department runs its application servers on a VMware vSphere 6.5 cluster of three ESXi hosts, configured for N+1 high availability and managed by a vCenter server. Each ESXi host has two 10-core Intel Xeon Gold processors and 96 GB of RAM. The virtual machines are stored on a fully redundant vSAN storage array that combines SSD and SAS drives for reliability and increased performance when needed. The virtualized environment provides high availability with no single point of failure.
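N+1 means the cluster keeps one host's worth of capacity in reserve, so the running virtual machines must fit on the remaining hosts if any one host fails. The Python sketch below works through that arithmetic for the three hosts described above. It is a simplification under stated assumptions: the hosts are identical, and hypervisor and vSAN overhead, CPU overcommit, and the specific vSphere HA admission-control settings are ignored; `n_plus_one_capacity` is a hypothetical helper, not a VMware API.

```python
# Figures from the text above: three identical ESXi hosts,
# each with two 10-core Xeon Gold CPUs and 96 GB of RAM.
HOSTS = 3
CORES_PER_HOST = 2 * 10
RAM_GB_PER_HOST = 96


def n_plus_one_capacity(hosts: int, per_host: int, spares: int = 1) -> int:
    """Capacity available to VMs while still tolerating `spares` host failures.

    Assumes identical hosts, so reserving `spares` hosts removes exactly
    `spares * per_host` from the usable pool."""
    if hosts <= spares:
        raise ValueError("need more hosts than reserved spares")
    return (hosts - spares) * per_host


if __name__ == "__main__":
    print("Physical cores:", HOSTS * CORES_PER_HOST)  # 60
    print("Cores usable under N+1:", n_plus_one_capacity(HOSTS, CORES_PER_HOST))  # 40
    print("RAM usable under N+1 (GB):", n_plus_one_capacity(HOSTS, RAM_GB_PER_HOST))  # 192
```

In other words, of the 288 GB of RAM across the three hosts, roughly 192 GB can be committed to VMs if the cluster is to survive the loss of any single host.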

The department also uses several Windows and Linux servers for sharing files and printers and for other domain functions. These servers also host research software tools, including VMD, GNM, and ANM, as well as several network-accessible databases of biological research data.

Storage:

The cluster has 880 TB of fully redundant network-attached storage (NAS). The VMware servers use 34 TB of redundant vSAN storage. A Linux NAS with more than 100 TB backs up Linux workstations over the network, and a 270 TB network backup server backs up cluster data.